Critical software systems are essential to our society. They help ensure the safety of everything from railway networks to nuclear power plants. Software malfunctions can have grave consequences for our infrastructures, the environment, and individuals. Software can only be implemented in these critical environments if there is a high degree of trust that it will run as intended. The study of programming languages—a branch of computer science—focuses on developing new ways of designing and coding software to make it safer.

Neural networks are now making inroads into critical software, and the rules of the game have changed. The way neural networks are designed makes them difficult to describe formally and, therefore, to verify. Python, the most widely used programming language for neural network design, is very difficult to analyze. To further complicate matters, neural networks are made up of series of very large algebraic calculations with no obvious link to the result produced. What this means is that, in terms of their characteristics, neural networks are very different from the conventional software the verification community is accustomed to working with.

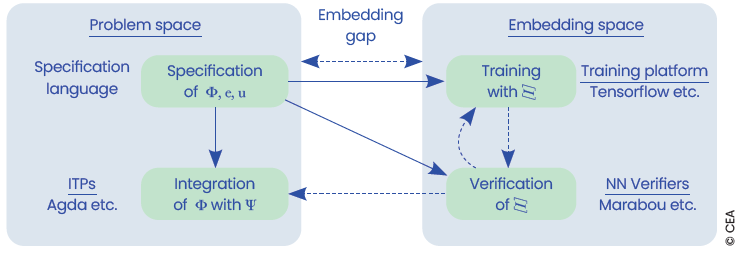

Our research began with a description of the effects of neural network verification on program verification tools. We made two crucial observations:

This enabled us to propose description languages suitable for this approach.

The specification of neural networks is an important research topic that must be addressed at the fundamental level of the programming language.