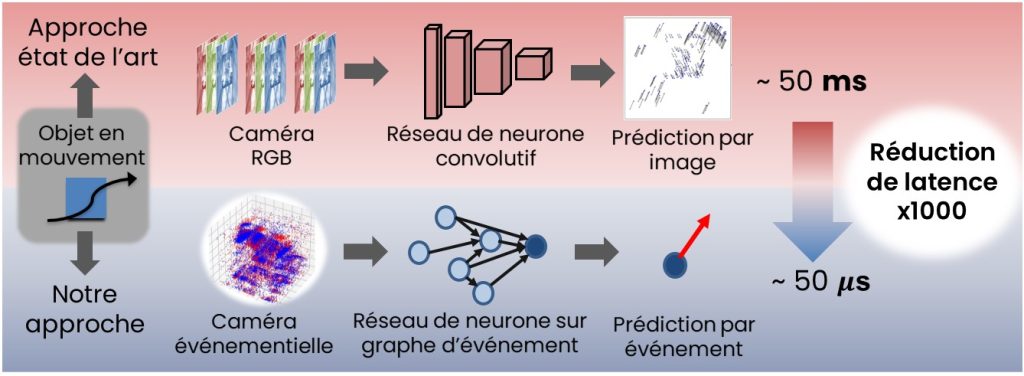

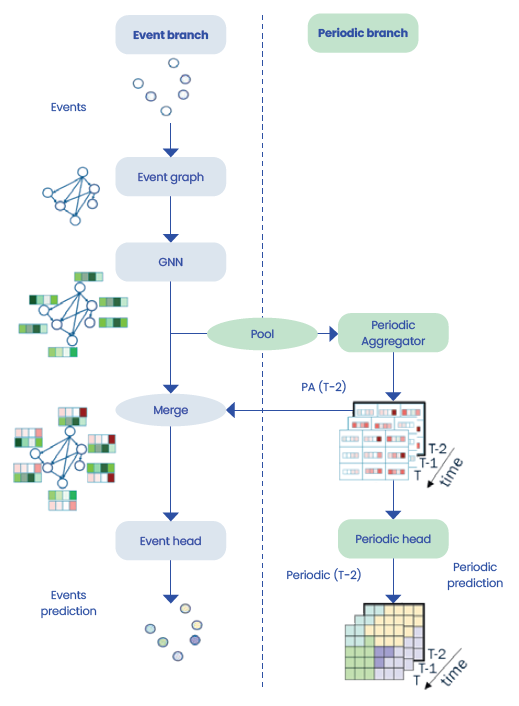

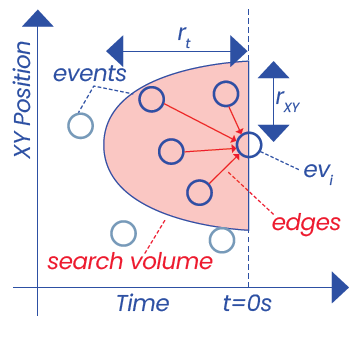

High temporal accuracy is a prerequisite for computer vision, especially for applications like autonomous driving and video stabilization. Conventional RGB image-based methods are severely limited by their computational cost and image acquisition frequency. With their high temporal resolution and asynchronous operation, event-driven cameras are a promising alternative. Currently, however, event-driven cameras rely on convolutional neural networks to reconstruct images from events, which cancels out any latency advantage and makes the solution too computationally costly for embedded systems.

produced by PROPHESEE

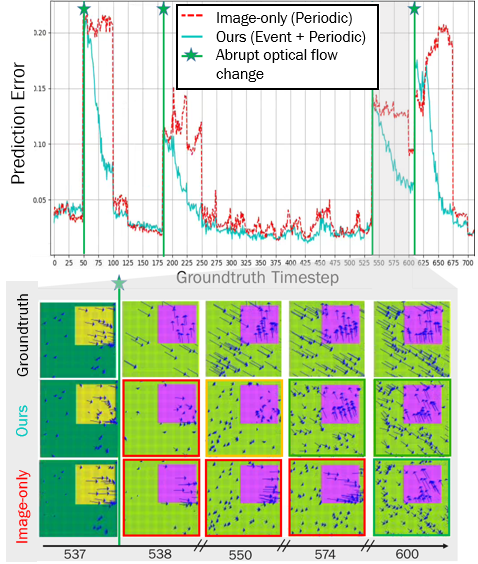

Our approach proved to be 1,000 times faster than conventional solutions, cutting speed-change detection latency to a per-event prediction time of just 50 μs on embedded hardware. It also requires 17 times fewer parameters and 48 times fewer operations per second, while maintaining competitive accuracy.

Our approach marks a step toward low-latency, energy-efficient smart sensors for embedded vision.