Understanding the challenge: adapting to heterogeneous data from multiple sources

Multi-source domain adaptation (MSDA) addresses the key challenge of enabling a model to utilize multiple heterogeneous data sources to generalize to an unannotated target domain. In many industrial applications, not only does data vary from location to location, but it is also often impossible to centralize. This creates a distribution mismatch between source and target that severely impedes the performance of conventional models, which are generally based on an assumption of homogeneous data that is rarely found in real-world situations.

The limitations of conventional federated learning: a central server that weakens the entire system

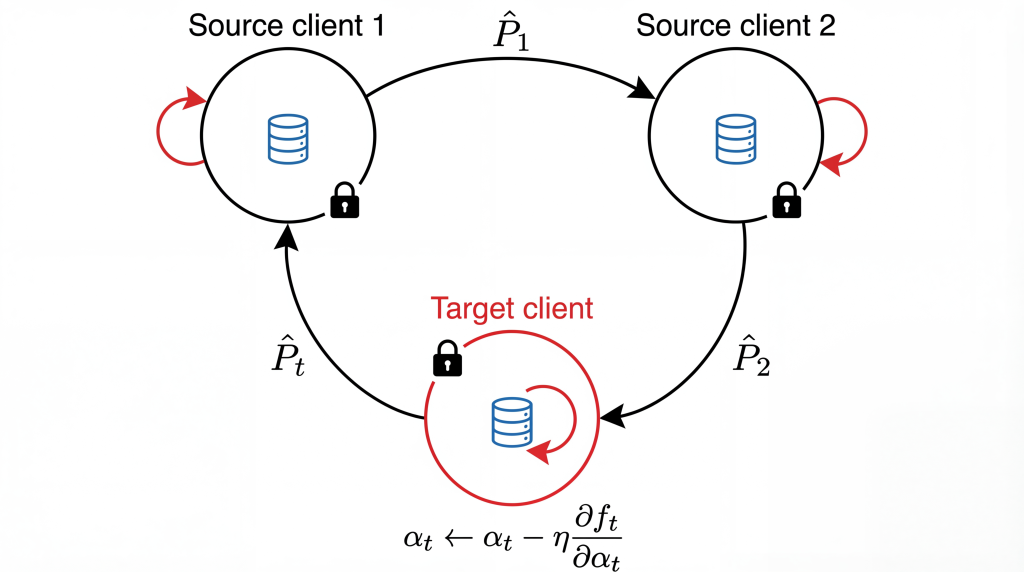

Recent federated learning approaches have attempted to adapt MSDA to the distributed setting while protecting data privacy. However, virtually all of them rely on a central server to aggregate and synchronize local models, which introduces weaknesses such as a single point of failure, increased cybersecurity risk, and high data transmission costs.

Our innovation: totally peer-to-peer learning

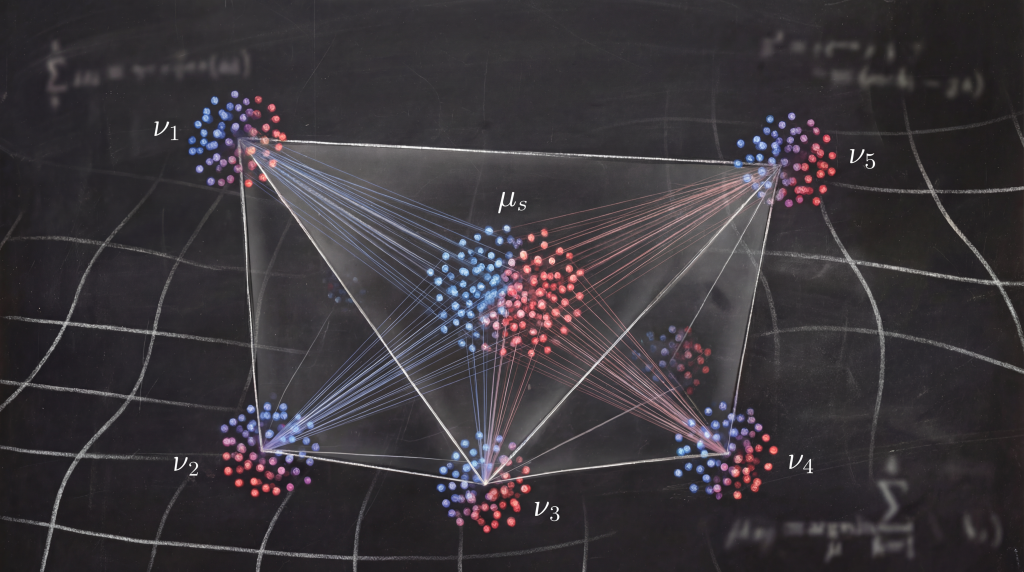

De-FedDaDiL eliminates the central server. Each client stores its own dictionary of atoms (the fundamental elements used to model distributions) locally and, at regular intervals, exchanges these atoms with a randomly chosen peer. The atoms received are merged with the client's own through a simple but effective aggregation process and then optimized locally. The barycentric coordinates, which encode how each domain projects onto the atoms, are never shared, guaranteeing enhanced privacy (Figure 1).
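The exchange described above can be illustrated with a toy sketch. It is not the De-FedDaDiL implementation: the `Client` class, the flat-list representation of an atom, and the helper names are hypothetical, the aggregation rule is assumed to be a plain element-wise average (the article only says it is simple), and the local optimization step is left as a placeholder.

```python
import random


class Client:
    """Hypothetical client: shares its atoms, keeps its barycentric coordinates private."""

    def __init__(self, n_atoms, atom_size, n_domains, rng):
        # Each atom is stored as a flat list of floats (a stand-in for its support points).
        self.atoms = [[rng.gauss(0.0, 1.0) for _ in range(atom_size)]
                      for _ in range(n_atoms)]
        # One row of barycentric coordinates per local domain; never sent to peers.
        self.coords = [[1.0 / n_atoms] * n_atoms for _ in range(n_domains)]

    def merge(self, received):
        # Assumed aggregation rule: element-wise average of local and received atoms.
        self.atoms = [[0.5 * (a + b) for a, b in zip(mine, theirs)]
                      for mine, theirs in zip(self.atoms, received)]

    def local_step(self):
        # Placeholder: the local optimization of atoms and coordinates would go here.
        pass


def gossip_round(clients, rng):
    """Every client sends a copy of its atoms to one randomly chosen peer."""
    # Snapshot first, so each message reflects the state at the start of the round.
    outgoing = [[atom[:] for atom in c.atoms] for c in clients]
    for sender in range(len(clients)):
        receiver = rng.choice([j for j in range(len(clients)) if j != sender])
        clients[receiver].merge(outgoing[sender])
    for c in clients:
        c.local_step()


def spread(clients):
    """Largest gap between any two clients on any single atom entry."""
    n_atoms, atom_size = len(clients[0].atoms), len(clients[0].atoms[0])
    return max(
        max(c.atoms[k][i] for c in clients) - min(c.atoms[k][i] for c in clients)
        for k in range(n_atoms) for i in range(atom_size)
    )


rng = random.Random(0)
clients = [Client(n_atoms=3, atom_size=4, n_domains=2, rng=rng) for _ in range(4)]
before = spread(clients)
for _ in range(20):
    gossip_round(clients, rng)
after = spread(clients)  # averaging can only keep or shrink the spread
```

Only `atoms` ever crosses the boundary between clients; `coords` is read and written locally. With averaging as the assumed merge rule and no local optimization, the clients in this toy setup simply drift toward a shared dictionary, which is the consensus behavior a serverless scheme depends on.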

Performance that rivals the best federated methods

De-FedDaDiL was tested on the ImageCLEF, Office-31, and Office-Home datasets, where it performed on par with (and, in some cases, better than) the centralized federated version. On ImageCLEF, it even outperformed all the methods tested. The results of these tests indicate a 2% reduction in performance compared to server-based approaches, while cutting the number of data exchanges in half. An analysis of the convergence between clients showed a gradual decrease in the Wasserstein distance between barycenters. This confirms that the clients align naturally despite the totally peer-to-peer approach with no central server.
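To make the convergence diagnostic concrete, here is a minimal sketch of how agreement between clients could be tracked. It is a simplification under stated assumptions: barycenters are reduced to one-dimensional samples of equal size with uniform weights, in which case the Wasserstein-1 distance is the mean absolute difference between the sorted samples. The function names are hypothetical, and the article does not say which Wasserstein variant or solver was used.

```python
from itertools import combinations


def wasserstein_1d(xs, ys):
    """W1 distance between two equal-size 1-D samples with uniform weights."""
    if len(xs) != len(ys):
        raise ValueError("samples must have the same size")
    # In 1-D, the optimal transport plan matches the sorted samples in order.
    return sum(abs(a - b) for a, b in zip(sorted(xs), sorted(ys))) / len(xs)


def mean_pairwise_distance(barycenters):
    """Average W1 distance over all pairs of clients' barycenters."""
    pairs = list(combinations(barycenters, 2))
    return sum(wasserstein_1d(a, b) for a, b in pairs) / len(pairs)
```

Logging `mean_pairwise_distance` after each communication round would give a single curve per experiment; a downward trend is the kind of alignment the analysis above reports.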