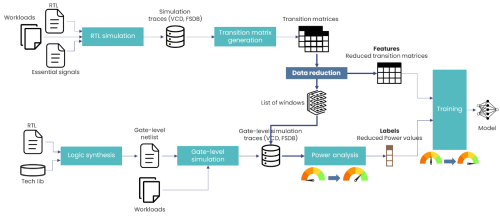

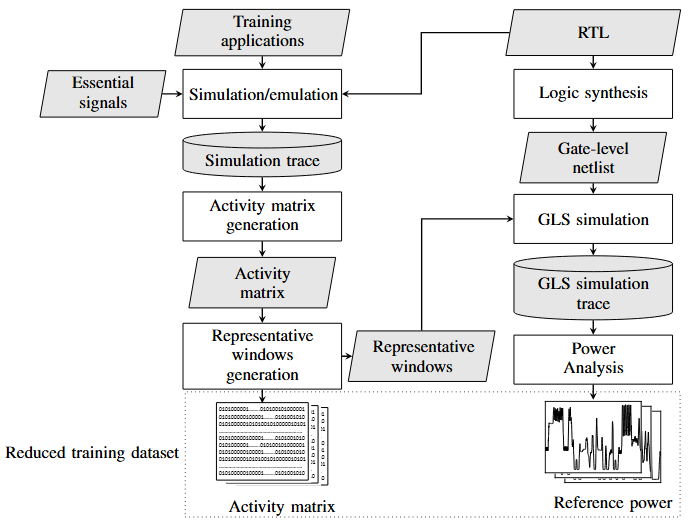

The proposed methodology (Figure 1) combines machine learning with a drastic reduction in the size of training datasets to speed up power consumption modeling for digital architectures.

Traditionally, these models are generated using datasets that combine RTL traces describing the circuit’s internal activity with gate-level power consumption data obtained using commercial power analysis tools. However, gate-level simulations are cumbersome, and generating the corresponding power consumption profiles is costly. Our methodology addresses both of these bottlenecks.

We use k-means clustering to automate the selection of representative windows. RTL traces are first segmented into fixed-size windows, which are then aggregated to enable robust clustering on complex time series.

The analysis is thus restricted to a small but informative subset of runtime segments. The gate-level simulations and corresponding power calculations are based on this subset. The amount of data to process is drastically reduced, but the behavioral diversity needed for learning is preserved.
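The selection step described above can be illustrated with a minimal sketch. It is not the authors' implementation: the function names (`segment`, `aggregate`, `kmeans`, `select_representatives`), the use of mean activity per signal as the aggregation, and the rule of keeping the window closest to each centroid are all assumptions made for illustration. It uses only the Python standard library; a real flow would more likely rely on an existing clustering library.

```python
import random


def segment(trace, window):
    """Split a trace (one activity vector per cycle) into fixed-size windows."""
    count = len(trace) // window  # a trailing partial window is dropped
    return [trace[i * window:(i + 1) * window] for i in range(count)]


def aggregate(win):
    """Summarise a window as its mean activity per signal (assumed feature)."""
    return [sum(col) / len(win) for col in zip(*win)]


def sq_dist(a, b):
    """Squared Euclidean distance between two feature vectors."""
    return sum((x - y) ** 2 for x, y in zip(a, b))


def assign(points, centroids):
    """Label each point with the index of its nearest centroid."""
    return [min(range(len(centroids)), key=lambda c: sq_dist(p, centroids[c]))
            for p in points]


def kmeans(points, k, iters=50, seed=0):
    """Plain Lloyd's k-means; returns (centroids, labels)."""
    rng = random.Random(seed)
    centroids = [list(p) for p in rng.sample(points, k)]
    for _ in range(iters):
        labels = assign(points, centroids)
        for c in range(k):
            members = [p for p, lab in zip(points, labels) if lab == c]
            if members:  # an empty cluster keeps its previous centroid
                centroids[c] = [sum(col) / len(members) for col in zip(*members)]
    return centroids, assign(points, centroids)


def select_representatives(features, k, seed=0):
    """Return, per non-empty cluster, the index of the window nearest its centroid."""
    centroids, labels = kmeans(features, k, seed=seed)
    reps = []
    for c in range(k):
        members = [i for i, lab in enumerate(labels) if lab == c]
        if members:
            reps.append(min(members, key=lambda i: sq_dist(features[i], centroids[c])))
    return sorted(reps)


# Synthetic trace (made-up data): 40 idle cycles, then 40 busy cycles, two signals.
trace = [[0, 0]] * 40 + [[1, 1]] * 40
features = [aggregate(w) for w in segment(trace, window=10)]
print(select_representatives(features, k=2))
```

On this toy trace, the sketch keeps one window from the idle phase and one from the busy phase. Only the selected windows would then need gate-level simulation and power analysis.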

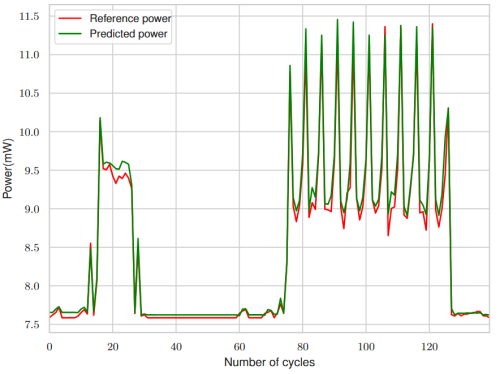

Experimental results, obtained on a RISC-V Rocket core and a masked AES, show significant gains: power consumption analysis time was reduced by a factor of up to 28, and model training time by a factor of up to 49. Despite the compressed data, the average prediction error remained around 5%. As an example, Figure 2 shows a cycle-by-cycle comparison of a segment of predicted power (green) and reference power (red) for a masked AES.
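The text does not specify which metric underlies the 5% figure. As one plausible reading, a cycle-by-cycle comparison such as the one in Figure 2 could be summarised by the mean absolute error normalised by the mean reference power. The sketch below assumes that definition; the function name `mean_relative_error` and the sample values are hypothetical and are not taken from the experiments.

```python
def mean_relative_error(predicted, reference):
    """Mean absolute error as a percentage of mean reference power (assumed metric)."""
    if len(predicted) != len(reference) or not reference:
        raise ValueError("traces must be non-empty and of equal length")
    mae = sum(abs(p - r) for p, r in zip(predicted, reference)) / len(reference)
    mean_ref = sum(reference) / len(reference)
    return 100.0 * mae / mean_ref


# Made-up per-cycle power values, for illustration only.
reference = [1.0, 2.0, 3.0]
predicted = [1.05, 1.9, 3.0]
print(f"{mean_relative_error(predicted, reference):.1f}%")
```

Other definitions, such as a per-cycle relative error averaged over the trace, would give different numbers on the same data.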

Up to 28 times faster data generation.

Up to 49 times faster training of power models while maintaining a prediction error rate of around 5%.

Our AI-assisted approach, which optimizes the selection of training data, significantly reduces simulation requirements without sacrificing the accuracy of power consumption models. This advance will pave the way toward faster, more scalable modeling.